In the last couple of posts, we’ve looked at ways to copy scalar properties from one object to another, with the high performance of explicit assignments and the low maintenance of reflection.

The approach of creating a lambda to operate on the object instance as a whole shows a lot of promise. For example, we may want to compare two object instances property-by-property, and the code for that would look quite similar.

    using System;
    using System.Linq;
    using System.Linq.Expressions;
    using System.Reflection;

    public static class Comparer
    {
        public static bool Compare<T>(this T source, T destination) { return CompareClosure<T>.Compare(source, destination); }

        // One compiled comparer is built and cached per closed generic type T.
        private static class CompareClosure<T>
        {
            private static Func<T, T, bool> BuildComparerLambda(Func<PropertyInfo, bool> propertyFilter = null)
            {
                propertyFilter = propertyFilter ?? (_ => true);
                var sourceParameterExpression = Expression.Parameter(typeof(T), "_left");
                var destinationParameterExpression = Expression.Parameter(typeof(T), "_right");
                var properties = typeof(T).GetProperties().Where(propertyFilter);
                // Map: one equality test per property.
                var expressions = properties.Select(_pi => Expression.Equal(Expression.Property(destinationParameterExpression, _pi), Expression.Property(sourceParameterExpression, _pi)));
                // Fold: combine the tests with &&, seeded with 'true'.
                var conjoinedExpression = expressions.Aggregate<Expression, Expression>(Expression.Constant(true), Expression.AndAlso);
                var lambdaExpression = Expression.Lambda<Func<T, T, bool>>(conjoinedExpression, sourceParameterExpression, destinationParameterExpression);
                return lambdaExpression.Compile();
            }

            private static readonly Func<T, T, bool> _compare = BuildComparerLambda();

            public static bool Compare(T source, T destination) { return _compare(source, destination); }
        }
    }

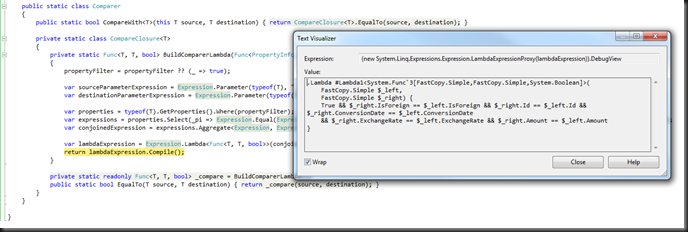

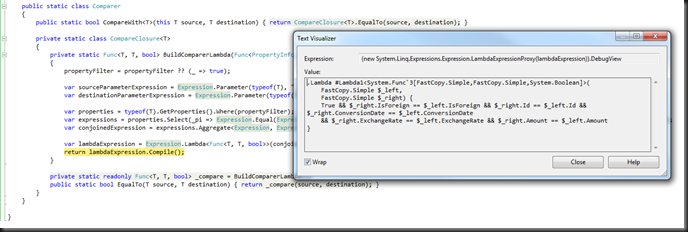

which gives us:

What we are doing in the code is mapping each property to a boolean value indicating whether the two instances have the same value for that property, and then folding that set of boolean values into a single boolean value by aggregating it with the && operator (and a seed value of ‘true’).
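
To make the map-and-fold shape concrete, here is a minimal sketch that performs the same two steps with plain reflection and LINQ instead of a compiled expression. The `Point` type and the `SlowCompare` name are assumptions made up for this illustration, and the sketch uses `object.Equals` where the expression version uses the `==` operator, so treat it as an analogy rather than an exact equivalent.

```csharp
using System;
using System.Linq;

// Hypothetical sample type, used only for illustration.
public class Point
{
    public int X { get; set; }
    public int Y { get; set; }
}

public static class FoldDemo
{
    // Reflection-based analogue of the compiled comparer:
    // map each property to a bool, then fold the bools with && (seed: true).
    public static bool SlowCompare<T>(T left, T right)
    {
        return typeof(T).GetProperties()
            .Select(pi => object.Equals(pi.GetValue(left, null), pi.GetValue(right, null)))
            .Aggregate(true, (acc, same) => acc && same);
    }

    public static void Main()
    {
        var a = new Point { X = 1, Y = 2 };
        var b = new Point { X = 1, Y = 2 };
        var c = new Point { X = 1, Y = 3 };

        Console.WriteLine(SlowCompare(a, b)); // True
        Console.WriteLine(SlowCompare(a, c)); // False
    }
}
```

For `Point`, the compiled lambda amounts to `true && a.X == b.X && a.Y == b.Y`, but without paying for reflection on every call.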

We can readily see a pattern emerging here:

There are two types of operations possible for a given arity. In the case of Compare and Copy where the operation arity is 2, we can create either an Action<T, T> or a Func<T, T, TResult>.

The Action<> case is a lambda that performs an operation on each property with reference to the given argument instances.

We can create a general operation builder by lifting a parameter which specifies the desired operation on a property, with reference to the lambda arguments.

    public static Action<T, T> BuildOperationAction<T>(
        Func<PropertyInfo, ParameterExpression, ParameterExpression, Expression> operationExpressionFactory)
    {
        var leftParameterExpression = Expression.Parameter(typeof(T), "_left");
        var rightParameterExpression = Expression.Parameter(typeof(T), "_right");
        var properties = typeof(T).GetProperties();
        var expressions = properties.Select(_ => operationExpressionFactory(_, leftParameterExpression, rightParameterExpression));
        var blockExpression = Expression.Block(expressions);
        var lambdaExpression = Expression.Lambda<Action<T, T>>(blockExpression, leftParameterExpression, rightParameterExpression);
        return lambdaExpression.Compile();
    }

The Func<> case is a lambda that maps the set of properties to a set of values with reference to the argument instances, and then aggregates this set of values to a single value using an aggregator.

We can create a general operation builder by lifting:

- a parameter which specifies the desired operation on a property, with reference to the lambda arguments

- a parameter which specifies the aggregate operation to fold the set of computed values to a single value, and

- a parameter specifying the initial value for the aggregator.

Thusly:

    public static Func<T, T, TResult> BuildOperationFunc<T, TResult>(
        Func<PropertyInfo, ParameterExpression, ParameterExpression, Expression> operationExpressionFactory,
        Func<Expression, Expression, Expression> conjunction, Expression seed)
    {
        var leftParameterExpression = Expression.Parameter(typeof(T), "_left");
        var rightParameterExpression = Expression.Parameter(typeof(T), "_right");
        var properties = typeof(T).GetProperties();
        var expressions = properties.Select(_ => operationExpressionFactory(_, leftParameterExpression, rightParameterExpression));
        var joinedExpression = expressions.Aggregate(seed, conjunction);
        var lambdaExpression = Expression.Lambda<Func<T, T, TResult>>(joinedExpression, leftParameterExpression, rightParameterExpression);
        return lambdaExpression.Compile();
    }

This allows us to implement Copy() and Compare() as follows:

    public static bool CompareWith<T>(this T source, T destination) { return CompareClosure<T>.Compare(source, destination); }

    private static class CompareClosure<T>
    {
        private static readonly Func<T, T, bool> _compare =
            BuildOperationFunc<T, bool>(
                // Per-property operation: an equality test (not an assignment).
                (_pi, _src, _dest) => Expression.Equal(Expression.Property(_src, _pi), Expression.Property(_dest, _pi)),
                Expression.AndAlso,
                Expression.Constant(true));

        public static bool Compare(T source, T destination) { return _compare(source, destination); }
    }

    public static void CopyTo<T>(this T source, T destination) { CopyClosure<T>.Copy(source, destination); }

    private static class CopyClosure<T>
    {
        private static readonly Action<T, T> _copy =
            BuildOperationAction<T>(
                // Per-property operation: assign the source value to the destination.
                (_pi, _src, _dest) => Expression.Assign(Expression.Property(_dest, _pi), Expression.Property(_src, _pi)));

        public static void Copy(T source, T destination) { _copy(source, destination); }
    }

Very neat.
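
As a quick check of how the two builders fit together, the following sketch can be compiled on its own. The builders are repeated in condensed form purely so the snippet is self-contained; the `Person` type, the `OperationBuilders` container class and the `Demo` class are assumptions introduced for this example.

```csharp
using System;
using System.Linq;
using System.Linq.Expressions;
using System.Reflection;

// Hypothetical sample type, used only for illustration.
public class Person
{
    public string Name { get; set; }
    public int Age { get; set; }
}

// Condensed copies of the two builders, hosted in an assumed container class.
public static class OperationBuilders
{
    public static Action<T, T> BuildOperationAction<T>(
        Func<PropertyInfo, ParameterExpression, ParameterExpression, Expression> factory)
    {
        var left = Expression.Parameter(typeof(T), "_left");
        var right = Expression.Parameter(typeof(T), "_right");
        var body = Expression.Block(typeof(T).GetProperties().Select(pi => factory(pi, left, right)));
        return Expression.Lambda<Action<T, T>>(body, left, right).Compile();
    }

    public static Func<T, T, TResult> BuildOperationFunc<T, TResult>(
        Func<PropertyInfo, ParameterExpression, ParameterExpression, Expression> factory,
        Func<Expression, Expression, Expression> conjunction, Expression seed)
    {
        var left = Expression.Parameter(typeof(T), "_left");
        var right = Expression.Parameter(typeof(T), "_right");
        var body = typeof(T).GetProperties().Select(pi => factory(pi, left, right)).Aggregate(seed, conjunction);
        return Expression.Lambda<Func<T, T, TResult>>(body, left, right).Compile();
    }
}

public static class Demo
{
    // Compare: equality test per property, folded with &&.
    public static readonly Func<Person, Person, bool> Compare =
        OperationBuilders.BuildOperationFunc<Person, bool>(
            (pi, l, r) => Expression.Equal(Expression.Property(l, pi), Expression.Property(r, pi)),
            Expression.AndAlso,
            Expression.Constant(true));

    // Copy: one assignment per property, executed as a block.
    public static readonly Action<Person, Person> Copy =
        OperationBuilders.BuildOperationAction<Person>(
            (pi, src, dest) => Expression.Assign(Expression.Property(dest, pi), Expression.Property(src, pi)));

    public static void Main()
    {
        var source = new Person { Name = "Ada", Age = 36 };
        var target = new Person();

        Console.WriteLine(Compare(source, target)); // False
        Copy(source, target);
        Console.WriteLine(Compare(source, target)); // True
    }
}
```

Note that this sketch assumes every public property is readable, writable and of a type that defines `==`; a type with read-only properties, or with no properties at all, would need a filter or a guard before the expressions are built.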

We could develop more operations than Copy and Compare, building fast, automatically maintained mappers for data access (think ORMs) and UI field access, simply by specifying the equivalent expression. The only development effort lies in writing that expression.

For example, here is a mapper which maps properties defined on type T, from a dynamic source object to backing fields on a target object of type T:

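    // RuntimeBinder and ThisArgument are not defined in this excerpt. Presumably RuntimeBinder
    // is an alias for Microsoft.CSharp.RuntimeBinder.Binder, and ThisArgument is a
    // CSharpArgumentInfo describing the receiver.
    private static readonly Action<T, T> _copyDynamicToStaticBackingFields =
        BuildOperationAction<T>(
            (_pi, _src, _dest) =>
            {
                // Read the property from the source through a dynamic call site.
                var sourceBinder = RuntimeBinder.GetMember(CSharpBinderFlags.InvokeSpecialName, _pi.Name, typeof(T), new[] { ThisArgument });
                var sourceGetExpression = Expression.Convert(Expression.Dynamic(sourceBinder, typeof(object), _src), _pi.PropertyType);
                // Convention: property "FooBar" is backed by the private field "_fooBar".
                var fieldName = String.Format("_{0}{1}", _pi.Name.Substring(0, 1).ToLowerInvariant(), _pi.Name.Substring(1));
                var fieldInfo = typeof(T).GetField(fieldName, BindingFlags.Instance | BindingFlags.NonPublic);
                // No matching backing field: emit a no-op for this property.
                if (fieldInfo == null) return Expression.Default(typeof(void));
                var destinationAccessExpression = Expression.Field(_dest, fieldInfo);
                return Expression.Assign(destinationAccessExpression, sourceGetExpression);
            });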

We could further develop this approach by developing the BuildOperationXXX functions for Action<>s and Func<>s of arity 1, which would allow us to quickly develop fast, low-maintenance object printers and serializers.
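
As a rough idea of what an arity-1 builder might look like, here is a minimal sketch of a Func<> variant and an object printer built on it. The names `BuildUnaryOperationFunc`, `UnaryOperationBuilder`, `PrinterDemo`, `BuildPrinter` and the `Book` type are all assumptions made up for this illustration; they are not part of the code shown earlier.

```csharp
using System;
using System.Linq;
using System.Linq.Expressions;
using System.Reflection;

// Hypothetical sample type, used only for illustration.
public class Book
{
    public string Title { get; set; }
    public int Year { get; set; }
}

public static class UnaryOperationBuilder
{
    // Assumed arity-1 counterpart of BuildOperationFunc: map each property to an
    // expression over a single instance, then fold the expressions into one.
    public static Func<T, TResult> BuildUnaryOperationFunc<T, TResult>(
        Func<PropertyInfo, ParameterExpression, Expression> operationExpressionFactory,
        Func<Expression, Expression, Expression> conjunction, Expression seed)
    {
        var instance = Expression.Parameter(typeof(T), "_instance");
        var body = typeof(T).GetProperties()
            .Select(pi => operationExpressionFactory(pi, instance))
            .Aggregate(seed, conjunction);
        return Expression.Lambda<Func<T, TResult>>(body, instance).Compile();
    }
}

public static class PrinterDemo
{
    private static readonly MethodInfo Concat =
        typeof(string).GetMethod("Concat", new[] { typeof(string), typeof(string) });
    private static readonly MethodInfo ConvertToString =
        typeof(Convert).GetMethod("ToString", new[] { typeof(object) });

    public static Func<T, string> BuildPrinter<T>()
    {
        return UnaryOperationBuilder.BuildUnaryOperationFunc<T, string>(
            // Map: property -> "Name=value; " (the value is boxed, then converted to a string).
            (pi, obj) => Expression.Call(Concat,
                Expression.Constant(pi.Name + "="),
                Expression.Call(Concat,
                    Expression.Call(ConvertToString,
                        Expression.Convert(Expression.Property(obj, pi), typeof(object))),
                    Expression.Constant("; "))),
            // Fold: string concatenation, seeded with the empty string.
            (acc, next) => Expression.Call(Concat, acc, next),
            Expression.Constant(string.Empty));
    }

    public static void Main()
    {
        var print = BuildPrinter<Book>();
        // Property order follows GetProperties(), which is typically declaration order.
        Console.WriteLine(print(new Book { Title = "Dune", Year = 1965 }));
    }
}
```

The printer follows the same map-and-fold pattern as Compare: only the per-property expression, the aggregator and the seed change.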

I believe that this set of generating functions is likely to be more generally useful, so I’ve created a CodePlex project for this. Please visit it and let me know how you used it!